AI Box vs Traditional IPC: Architecting Lean Edge Inference Networks

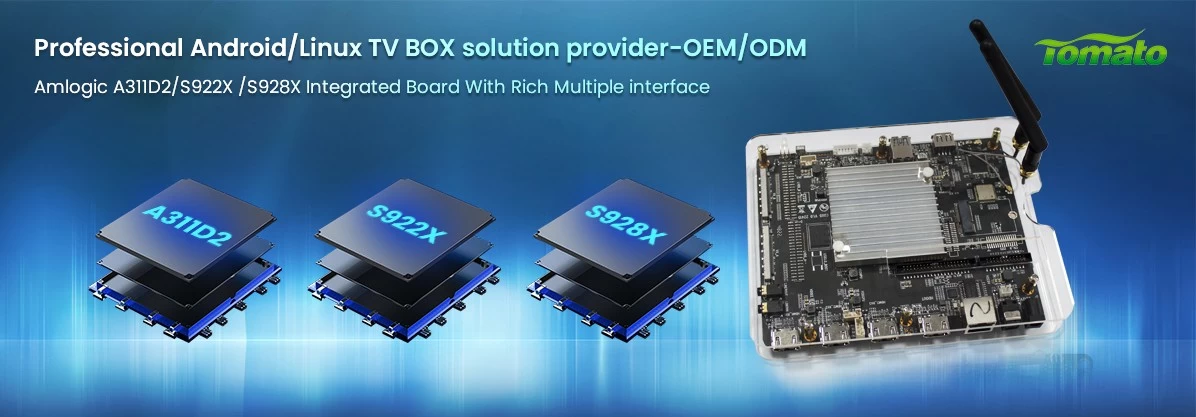

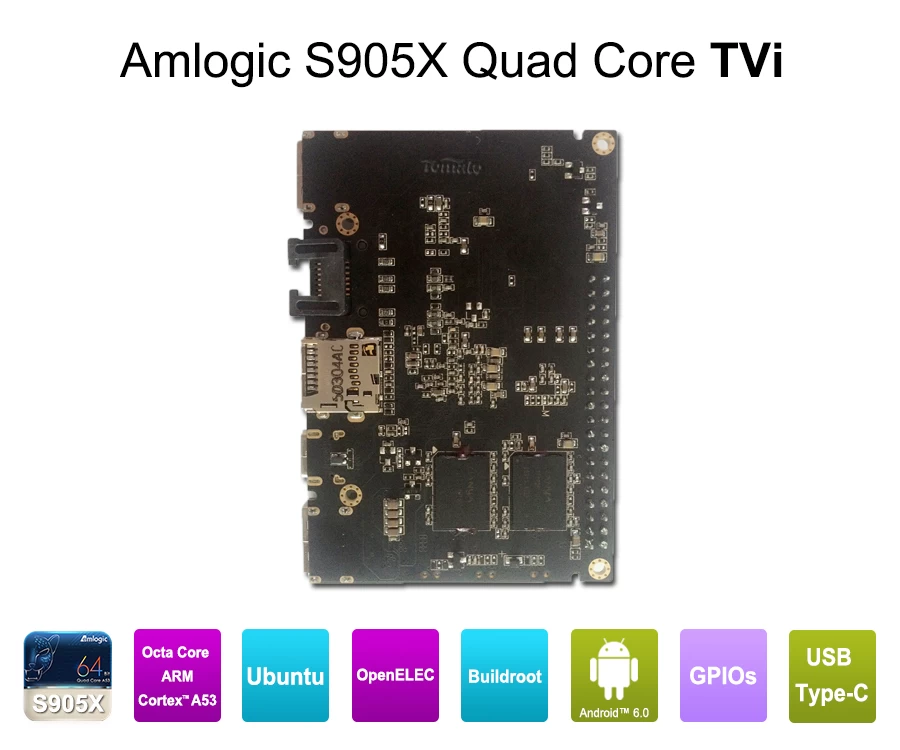

The integration of 5 to 6 TOPS (Tera Operations Per Second) Neural Processing Units (NPUs) directly into ARM-based SoCs—such as the Amlogic A311D2 or the Rockchip RK3588—has forced a hard pivot in commercial system integration. For machine vision, digital signage analytics, and local IoT processing, the reliance on high-wattage, x86-based Industrial PCs (IPCs) is becoming an architectural liability. System integrators must now evaluate the processing efficiency, physical footprint, and lifecycle management capabilities of the dedicated Edge AI Box against legacy IPC frameworks.

While a traditional IPC utilizes brute-force CPU and GPU combinations to execute tasks, an AI Box routes machine learning workloads through a dedicated NPU. This fundamental difference dictates the hardware’s thermal envelope, deployment flexibility, and ultimately, the unit economics of a scaled network.
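To make the unit-economics point concrete, the following is a minimal sketch of how fleet-wide energy cost scales with per-unit power draw. The wattages, fleet size, and electricity price are hypothetical placeholders chosen only for illustration; they are not measurements of any particular IPC or AI Box, and real figures should come from bench testing under the target workload.

```python
def annual_energy_cost(watts: float, units: int, price_per_kwh: float,
                       hours_per_year: float = 24 * 365) -> float:
    """Yearly electricity cost for a fleet of identical devices running 24/7."""
    kwh_per_unit = watts * hours_per_year / 1000.0  # watt-hours -> kilowatt-hours
    return kwh_per_unit * units * price_per_kwh


# Hypothetical inputs (assumptions, not vendor data).
IPC_WATTS = 65.0        # assumed average draw of a fan-cooled x86 IPC
AI_BOX_WATTS = 10.0     # assumed average draw of an ARM-based AI Box
FLEET_SIZE = 1000
PRICE_PER_KWH = 0.15    # assumed electricity price per kWh

ipc_cost = annual_energy_cost(IPC_WATTS, FLEET_SIZE, PRICE_PER_KWH)
box_cost = annual_energy_cost(AI_BOX_WATTS, FLEET_SIZE, PRICE_PER_KWH)

print(f"IPC fleet:    {ipc_cost:,.0f} per year")
print(f"AI Box fleet: {box_cost:,.0f} per year")
print(f"Difference:   {ipc_cost - box_cost:,.0f} per year")
```

The calculation covers electricity only. Cooling overhead inside enclosures, fan replacement, and technician visits would add to the gap, but they depend on the site and are left out here.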

The Processing Paradigm: x86 Brute Force vs. ARM-Based NPU Efficiency

Traditional IPCs rely heavily on x86 architectures running comprehensive operating systems. While powerful, this approach is inherently inefficient for single-function, continuous-stream inference tasks. Processing high-definition video inputs for object recognition on an IPC requires substantial CPU cycles and active cooling, driving up both power consumption and hardware degradation rates.

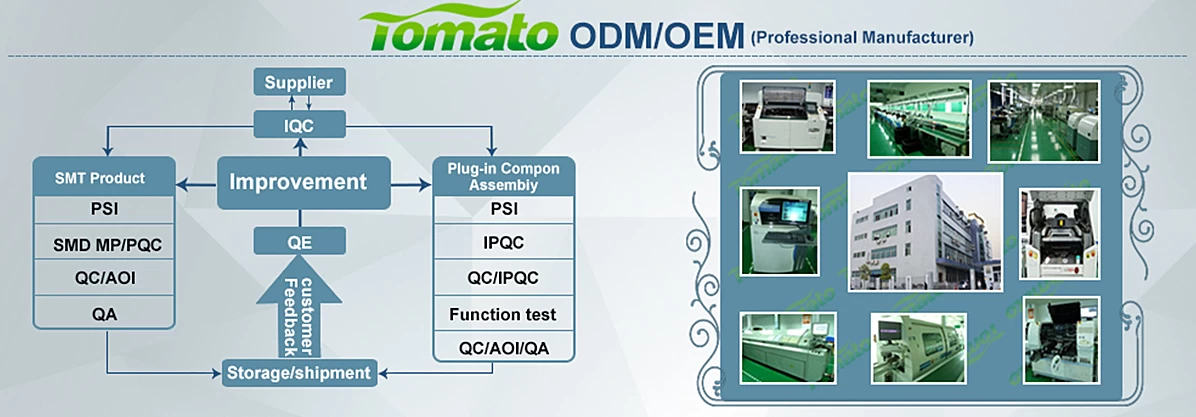

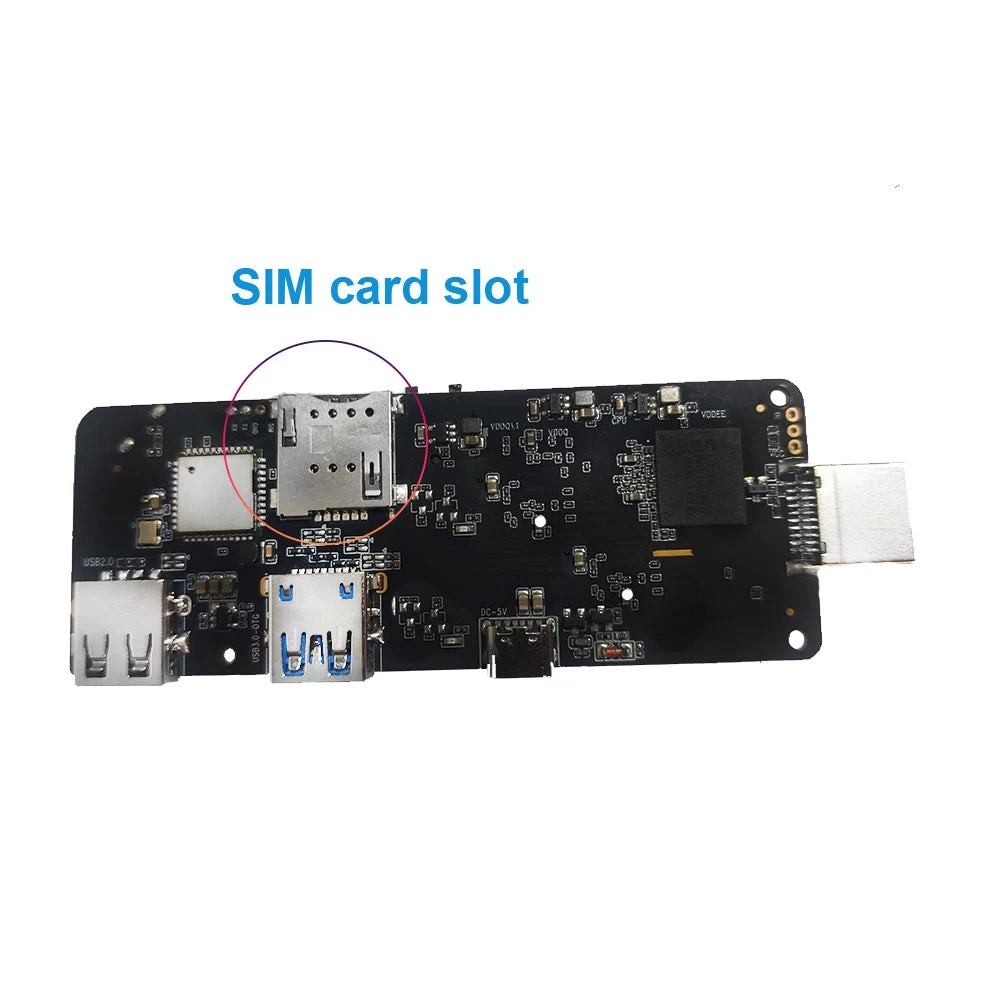

Conversely, a commercial-grade AI Box is designed around task-specific silicon. Hardware-accelerated decoding (such as native AV1 codec support) handles video ingestion, while the integrated NPU executes the neural network models. This segregates the computational load, allowing an ARM-based AI Box to operate at a fraction of the power draw of an equivalent IPC. However, unlocking this efficiency requires precise hardware alignment. Generic, off-the-shelf Android boxes lack the I/O configurations required for industrial sensors. To bridge this gap, SZTomato modifies the hardware at the PCBA level, re-engineering the motherboard layout so that the required RS232/RS485 ports, dual Gigabit LAN, or specialized GPIO interfaces sit on the same board as the NPU-equipped SoC.
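A rough way to size such a box is to compare the NPU's rated throughput against the per-frame cost of the model. The sketch below is a back-of-envelope estimate under stated assumptions: the model cost (in giga-operations per inference), the frame rate, and the sustained utilization factor are all hypothetical. Rated TOPS figures are peak numbers, so the utilization factor is a placeholder for everything that keeps real throughput below peak (memory bandwidth, operator support, pre- and post-processing).

```python
import math


def max_streams(npu_tops: float, model_gops_per_frame: float, fps: float,
                utilization: float = 0.5) -> int:
    """Estimate how many video streams one NPU can serve.

    npu_tops:             rated peak throughput in tera-operations per second
    model_gops_per_frame: model cost in giga-operations per inference (assumed)
    fps:                  inferences per second required per stream
    utilization:          assumed fraction of peak that is actually sustained
    """
    ops_available = npu_tops * 1e12 * utilization
    ops_per_stream = model_gops_per_frame * 1e9 * fps
    return math.floor(ops_available / ops_per_stream)


# Hypothetical example: a 6 TOPS NPU, a detector costing 10 GOPs per frame,
# 15 inferences per second per camera, and 50% sustained utilization.
print(max_streams(npu_tops=6.0, model_gops_per_frame=10.0, fps=15))
```

An estimate like this only sets an upper bound for planning. Actual capacity has to be confirmed by profiling the quantized model on the target SoC.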

Physical Deployment Constraints and Thermal Reality

Commercial environments dictate strict physical constraints. Deploying hardware inside a retail kiosk, a sealed outdoor digital signage enclosure, or a factory floor panel means operating with little or no ambient airflow.

IPCs often require active fans or large, heavy extruded chassis to dissipate x86 heat. Moving mechanical parts introduce points of failure in dust-heavy or high-vibration settings. An AI Box operates with a significantly lower Thermal Design Power (TDP). Despite the lower heat generation, continuous 24/7 NPU utilization still requires professional thermal management. SZTomato engineers specialized cooling solutions, integrating custom-milled aluminum heat sinks, advanced thermal compounds, and firmware-level thermal throttling as a safeguard, so that the AI Box sustains its inference performance without active cooling fans.
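The throttling logic itself can be illustrated with a small hysteresis governor. This is a user-space sketch of the general technique, not SZTomato's firmware: the thresholds are hypothetical, and the sysfs path is the standard Linux thermal interface, whose zone index differs from board to board. Using separate throttle and resume thresholds prevents the device from flapping between states when the temperature hovers near a single limit.

```python
from pathlib import Path

# Standard Linux thermal sysfs node; the zone index is board-specific (assumption).
THERMAL_ZONE = Path("/sys/class/thermal/thermal_zone0/temp")


def read_temp_c(path: Path = THERMAL_ZONE) -> float:
    """Read the SoC temperature; the kernel reports millidegrees Celsius."""
    return int(path.read_text().strip()) / 1000.0


class ThrottleGovernor:
    """Hysteresis: throttle at a high threshold, resume only below a lower one."""

    def __init__(self, throttle_at_c: float = 85.0, resume_at_c: float = 75.0):
        if resume_at_c >= throttle_at_c:
            raise ValueError("resume threshold must be below throttle threshold")
        self.throttle_at_c = throttle_at_c
        self.resume_at_c = resume_at_c
        self.throttled = False

    def update(self, temp_c: float) -> bool:
        """Feed one temperature sample; return True while throttling is active."""
        if not self.throttled and temp_c >= self.throttle_at_c:
            self.throttled = True
        elif self.throttled and temp_c <= self.resume_at_c:
            self.throttled = False
        return self.throttled


# Simulated temperature trace (hypothetical values) instead of live sensor reads.
trace = [70.0, 80.0, 86.0, 82.0, 78.0, 74.0, 80.0]
governor = ThrottleGovernor()
decisions = [governor.update(t) for t in trace]
print(decisions)
```

On a real device, the `update` result would drive something like an NPU clock cap or a reduced inference frame rate, and the samples would come from `read_temp_c()` on a timer.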

Firmware Integrity: Overcoming Bloatware via Kernel Optimization

Hardware dictates capability; firmware dictates stability. A primary failure point for traditional IPC deployments is the reliance on generic operating systems (standard Windows IoT or unoptimized Linux distributions). These operating systems run hundreds of background processes irrelevant to the integrator’s specific machine learning application, consuming valuable RAM and increasing the attack surface.

Deploying an AI Box successfully requires stripping the software stack down to what the application needs. SZTomato's engineering framework prioritizes rigorous Linux/Android kernel optimization. By removing consumer-facing Android modules and optimizing the kernel scheduler for continuous NPU utilization, system resources are dedicated entirely to the primary application. Furthermore, SZTomato develops custom UI/UX firmware, ensuring the hardware boots directly into a locked-down, client-specific interface. This prevents unauthorized third-party application installations and guarantees operational consistency across the deployment.

Lifecycle Management and Secure Fleet Integration

Scaling an edge network from ten units to ten thousand units requires centralized control. IPC networks often rely on fragmented, third-party remote desktop protocols for maintenance, creating security vulnerabilities and inconsistent patch management.

A dedicated OEM/ODM partnership centralizes fleet management. SZTomato provides comprehensive SDK/API integration, allowing a client’s proprietary software stack to communicate directly with the AI Box's hardware sensors and NPU. For commercial media deployments, robust HDCP encryption protocols are integrated at the firmware level to secure proprietary AV streams. Crucially, lifecycle maintenance is handled via customized, closed-loop OTA (Over-The-Air) update systems. This ensures that kernel patches, updated ML models, and security revisions are pushed securely to the entire hardware fleet without relying on public servers or manual technician intervention.
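The device-side checks in a closed-loop OTA flow can be sketched as follows. This is an illustrative outline, not SZTomato's update system: the manifest fields, the key, and the function names are hypothetical. It uses an HMAC with a shared key purely because that is available in the Python standard library; production update systems typically rely on asymmetric signatures so that devices hold no signing secret.

```python
import hashlib
import hmac


def parse_version(version: str) -> tuple:
    """Turn '1.4.0' into (1, 4, 0) so versions compare numerically."""
    return tuple(int(part) for part in version.split("."))


def sign_manifest(version: str, sha256_hex: str, key: bytes) -> str:
    """Authenticate the manifest fields with HMAC-SHA256 (stand-in for a signature)."""
    message = f"{version}:{sha256_hex}".encode()
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def should_install(payload: bytes, manifest: dict,
                   installed_version: str, key: bytes) -> bool:
    """Accept an update only if it is authentic, intact, and newer."""
    expected_mac = sign_manifest(manifest["version"], manifest["sha256"], key)
    if not hmac.compare_digest(expected_mac, manifest["mac"]):
        return False  # manifest was not produced by the key holder
    actual_digest = hashlib.sha256(payload).hexdigest()
    if not hmac.compare_digest(actual_digest, manifest["sha256"]):
        return False  # payload corrupted or swapped in transit
    # Reject downgrades and re-installs of the current version.
    return parse_version(manifest["version"]) > parse_version(installed_version)


# Hypothetical demo values.
KEY = b"demo-key-not-for-production"
payload = b"firmware image bytes"
digest = hashlib.sha256(payload).hexdigest()
manifest = {"version": "1.4.0", "sha256": digest,
            "mac": sign_manifest("1.4.0", digest, KEY)}

print(should_install(payload, manifest, "1.3.2", KEY))          # valid, newer
print(should_install(payload + b"x", manifest, "1.3.2", KEY))   # tampered payload
print(should_install(payload, manifest, "1.4.0", KEY))          # not newer
```

The same three checks apply whether the payload is a kernel patch, an updated ML model, or a security revision. Only the install step after a successful check differs.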

Strategic Procurement for B2B Integrators

The transition from traditional IPCs to dedicated AI Boxes is not merely a hardware swap; it is a shift toward application-specific engineering. For B2B procurement managers and system integrators, purchasing generic retail hardware tends to lead to thermal failure, I/O bottlenecks, and software bloat.

Scaling localized edge inference requires a vendor capable of full-stack intervention. From initial PCBA layout design and specialized thermal engineering to final kernel optimization and secure OTA deployment, SZTomato provides the OEM/ODM framework necessary to architect reliable, commercial-grade AI Box networks.