Is it Worth Buying an AI Box? Architecting High-Yield Edge Deployments

Cloud round-trip latency makes real-time computer vision impractical in many industrial machine vision and responsive digital signage environments. Relying on centralized server inference also incurs recurring bandwidth costs and leaves the deployment vulnerable whenever the network degrades. The AI Box is the hardware designed to address these problems.

However, upgrading a deployment infrastructure from standard Android TV boxes to specialized AI Box hardware requires a significant capital expenditure. For B2B integrators and procurement officers, determining if an AI Box is "worth it" requires a strict evaluation of localized processing demands versus hardware costs.

Evaluating the Latency and Bandwidth Bottleneck

Standard set-top boxes and commercial mini PCs rely on general-purpose CPUs and modest integrated GPUs. Compared with a dedicated accelerator, these processors are inefficient per watt at executing the dense matrix multiplications behind models built with frameworks like TensorFlow or PyTorch. When a standard device captures visual data, such as audience analytics at a retail kiosk, it must compress the video, transmit it to a cloud server, wait for processing, and receive the result back.

An AI Box removes this network round trip. By integrating a dedicated Neural Processing Unit (NPU) directly onto the System on a Chip (SoC), the device executes inference models locally. If your deployment requires sub-10 millisecond response times or operates in environments with restricted uplink bandwidth, moving to an AI Box is a requirement rather than an optional upgrade.
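The comparison between the cloud round trip and local inference can be sketched as a simple latency budget. This is a minimal illustration, not a measurement: every millisecond figure below is an assumed placeholder, and the `CloudPath` class and `meets_budget` helper are hypothetical names introduced only for this example.

```python
from dataclasses import dataclass


@dataclass
class CloudPath:
    """Per-frame latency components of a cloud round trip, in milliseconds."""
    encode_ms: float     # compress the captured frame
    uplink_ms: float     # transmit the frame to the server
    queue_ms: float      # wait for a free inference worker
    inference_ms: float  # run the model on the server
    downlink_ms: float   # return the result to the device

    def total_ms(self) -> float:
        return (self.encode_ms + self.uplink_ms + self.queue_ms
                + self.inference_ms + self.downlink_ms)


def meets_budget(latency_ms: float, budget_ms: float) -> bool:
    """True when the end-to-end latency fits inside the response budget."""
    return latency_ms <= budget_ms


# Assumed, illustrative figures; replace them with your own measurements.
cloud = CloudPath(encode_ms=8.0, uplink_ms=35.0, queue_ms=10.0,
                  inference_ms=12.0, downlink_ms=20.0)
local_inference_ms = 9.0  # assumed on-device NPU inference time
budget_ms = 10.0          # the sub-10 ms target discussed above

for name, latency in (("cloud", cloud.total_ms()), ("local", local_inference_ms)):
    verdict = "fits" if meets_budget(latency, budget_ms) else "exceeds"
    print(f"{name}: {latency:.1f} ms ({verdict} the {budget_ms:.0f} ms budget)")
```

Breaking the cloud path into components makes it clear that a faster server alone cannot close the gap: in this sketch the network legs dominate the total.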

Matching NPU Capabilities to Workload Requirements

The primary metric for evaluating an AI Box is TOPS (Tera Operations Per Second). The ROI of the device scales directly with your ability to utilize its compute capacity without triggering thermal throttling.

- Light Workloads (1-2 TOPS): Sufficient for basic audio recognition, wake-word detection, and simple biometric access control.

- Heavy Workloads (5+ TOPS): Required for concurrent multi-stream video analysis. Hardware utilizing chipsets like the Rockchip RK3588 delivers up to 6 TOPS, enabling simultaneous object detection, facial recognition, and vehicle counting directly at the edge.

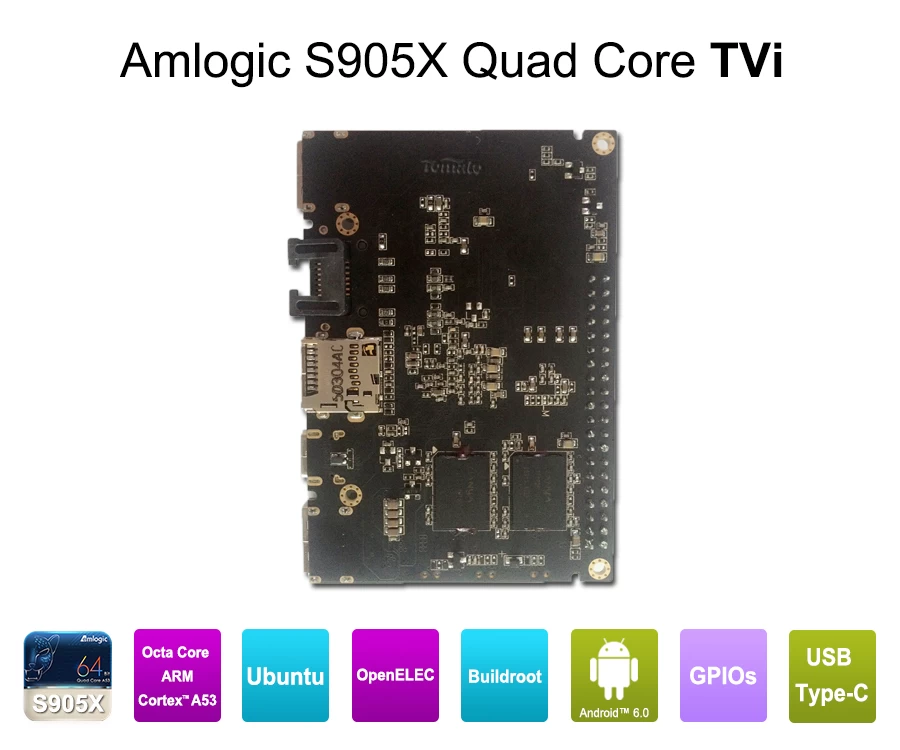

If your application does not require computer vision or complex predictive algorithms, deploying a high-TOPS AI Box results in stranded compute capacity and a negative ROI. A standard Amlogic-based streaming media player is sufficient for passive media decoding.
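One rough way to check whether a workload would leave compute stranded is to estimate the NPU rating it actually needs. The sketch below is a back-of-the-envelope calculation under stated assumptions: the per-inference operation count, frame rate, stream count, and the 50% achievable-utilization factor are all illustrative, and `required_tops` is a hypothetical helper. Real throughput depends on the model, its quantization, and the vendor toolchain.

```python
def required_tops(gops_per_inference: float, fps: float, streams: int,
                  utilization: float = 0.5) -> float:
    """Estimate the rated TOPS needed to sustain a workload.

    gops_per_inference: billions of operations per forward pass (model-specific)
    fps: inferences per second on each stream
    streams: number of concurrent video streams
    utilization: assumed fraction of the rated peak achieved in practice
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    sustained_tops = gops_per_inference * fps * streams / 1000.0
    return sustained_tops / utilization


# Assumed workload: an 8 GOP detector at 15 fps on 4 camera streams.
needed = required_tops(gops_per_inference=8.0, fps=15.0, streams=4)
rated = 6.0  # the "up to 6 TOPS" figure quoted above for the RK3588

print(f"estimated requirement: {needed:.2f} TOPS")
print(f"share of a {rated:.0f} TOPS device: {needed / rated:.0%}")
```

If the estimated requirement is a small fraction of the device rating, a lower tier is likely enough; if it approaches the rating, thermal headroom becomes the limiting factor.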

Hardware Economics: The TCO of Localized Inferencing

While the initial unit cost of an AI Box is higher than that of legacy hardware, the Total Cost of Ownership (TCO) comparison often tips in its favor within the first 12 months of enterprise deployment, driven by three factors:

- Cloud API Reductions: Processing data locally reduces or eliminates recurring fees for cloud-based machine learning APIs.

- Server Infrastructure Avoidance: Edge processing decentralizes the compute load, avoiding costly centralized server upgrades as your digital signage or IoT network scales.

- Data Privacy Compliance: By processing video feeds locally and discarding the raw footage immediately, AI Boxes make it easier to comply with strict data privacy frameworks (e.g., GDPR, CCPA), reducing legal risk and compliance overhead.
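The break-even point behind this TCO argument can be estimated with simple arithmetic. The following is a minimal sketch that considers only the first factor (recurring cloud fees); the per-unit prices and monthly fee are invented for illustration, and `break_even_month` is a hypothetical helper rather than an established formula.

```python
import math
from typing import Optional


def break_even_month(extra_hardware_cost: float,
                     monthly_savings: float) -> Optional[int]:
    """First whole month in which cumulative savings cover the price premium.

    Returns None when there are no recurring savings to recover the premium.
    """
    if monthly_savings <= 0:
        return None
    return math.ceil(extra_hardware_cost / monthly_savings)


# Assumed per-unit figures, for illustration only.
ai_box_price = 260.0       # hypothetical AI Box unit cost
legacy_box_price = 80.0    # hypothetical standard Android TV box unit cost
cloud_fee_per_month = 20.0 # hypothetical cloud inference fee avoided per unit

month = break_even_month(ai_box_price - legacy_box_price, cloud_fee_per_month)
print(f"premium recovered in month: {month}")
```

Avoided server upgrades and compliance overhead would shorten the payback period further, but they are harder to attribute per unit and are left out of this sketch.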

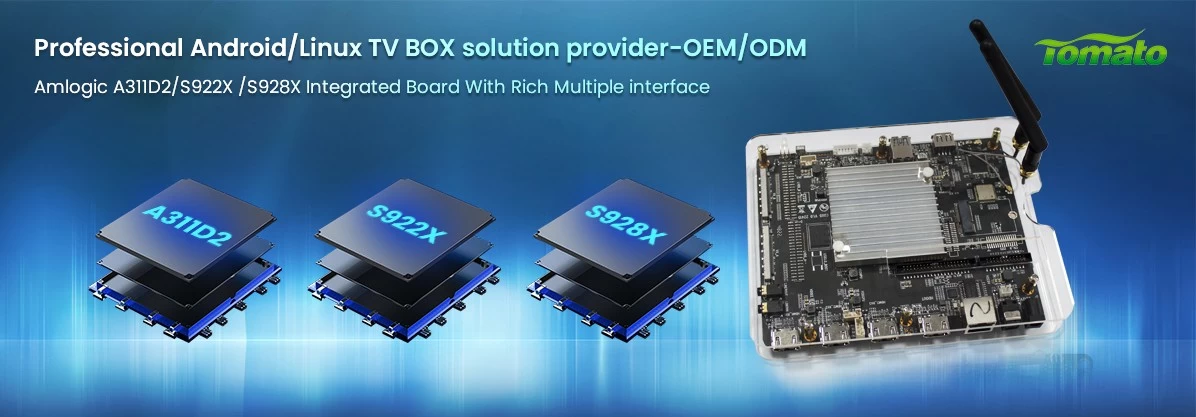

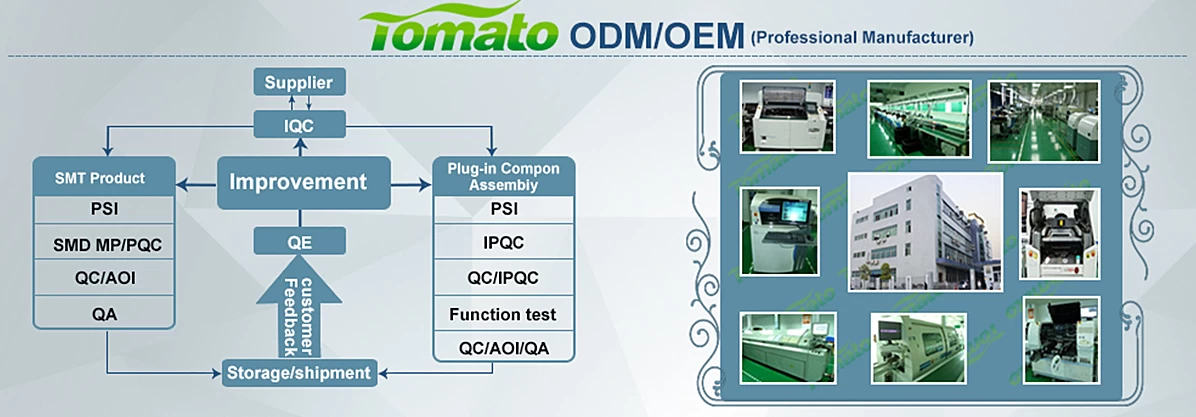

The Necessity of PCBA and Firmware Engineering

Consumer-grade AI hardware is rarely suited to B2B deployment. Industrial applications typically demand rigorous OEM/ODM customization.

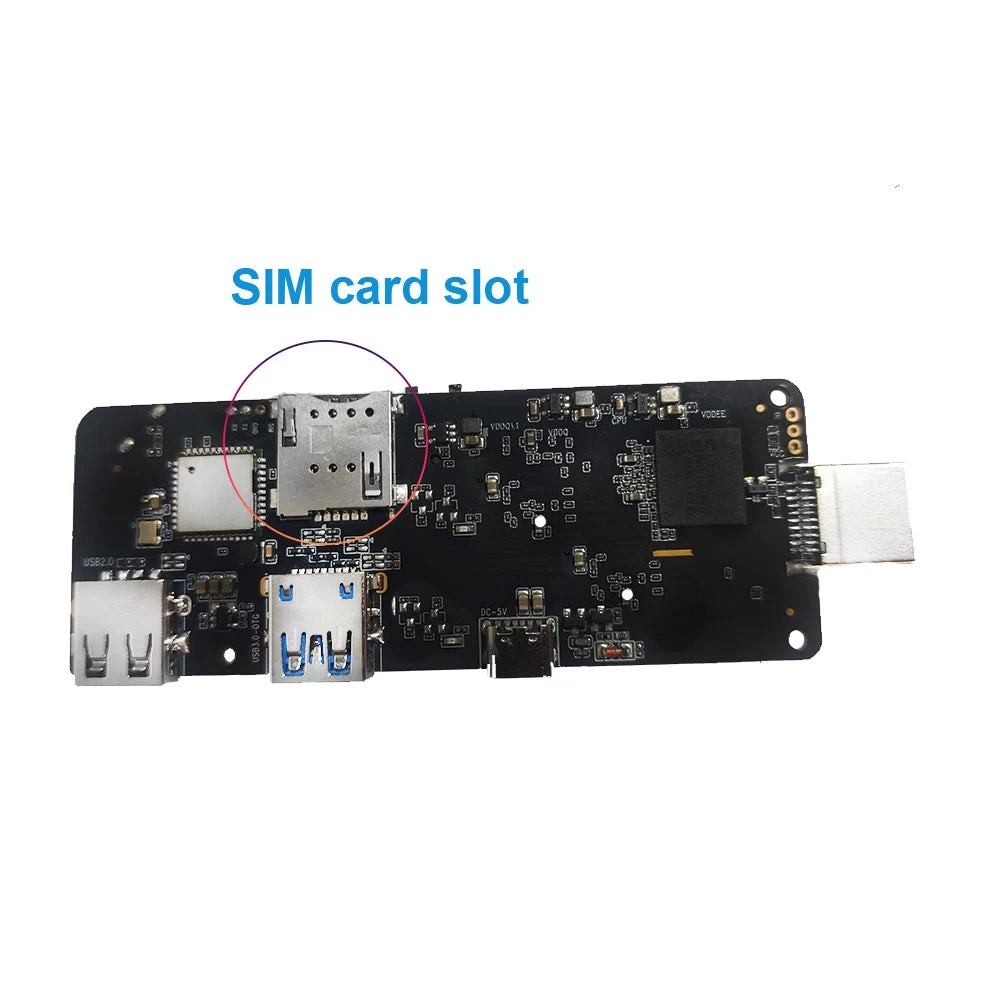

An off-the-shelf AI Box often lacks the specific I/O configurations (RS-232, dual gigabit LAN, GPIO) required for industrial integration. Furthermore, sustained NPU utilization generates significant thermal loads. A viable AI Box requires custom Printed Circuit Board Assembly (PCBA) design combined with extruded aluminum enclosures and carefully placed heat sinks to ensure 24/7 continuous operation. Finally, enterprise integrators require deep firmware engineering, including bootloader locking and custom Android Open Source Project (AOSP) compilation, to lock down the system and integrate proprietary SDKs.
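On the software side, sustained thermal load can be monitored so that the application backs off before the SoC throttles on its own. The sketch below assumes a Linux-based device that exposes the kernel's thermal sysfs interface, where each zone's `temp` file reports millidegrees Celsius. The zone index, the temperature thresholds, and the frame rates are assumptions, and `target_fps` is a hypothetical policy rather than any vendor's API.

```python
from pathlib import Path

# Assumed zone; which zone maps to the SoC or NPU varies by board.
THERMAL_ZONE = Path("/sys/class/thermal/thermal_zone0/temp")


def read_zone_celsius(zone: Path) -> float:
    """Read a thermal zone file (millidegrees Celsius) and return degrees."""
    return int(zone.read_text().strip()) / 1000.0


def target_fps(temp_c: float, full_fps: int = 30, reduced_fps: int = 10,
               warn_c: float = 75.0, critical_c: float = 90.0) -> int:
    """Pick an inference rate for the current temperature.

    The thresholds are illustrative; take real limits from the SoC datasheet.
    """
    if temp_c >= critical_c:
        return 0            # pause inference until the device cools
    if temp_c >= warn_c:
        return reduced_fps  # shed load before hardware throttling starts
    return full_fps


if THERMAL_ZONE.exists():
    temp = read_zone_celsius(THERMAL_ZONE)
    print(f"{temp:.1f} C -> run inference at {target_fps(temp)} fps")
else:
    print("thermal zone not available on this machine")
```

A policy like this complements the enclosure and heat sink design rather than replacing it: it only trades frame rate for temperature when the passive cooling is already saturated.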

Strategic Recommendation

The decision to procure an AI Box depends on your application layer. If your network only displays content passively, standard hardware suffices. If your infrastructure relies on real-time data ingestion, local machine learning execution, and near-instant mechanical or digital responses, the AI Box is the appropriate architectural choice.

Evaluate your current network bottlenecks, calculate your monthly cloud inferencing expenditures, and consult with a dedicated OEM/ODM hardware engineering team to specify the exact NPU requirements and PCBA modifications necessary for your next deployment.