Are AI Boxes Worth It? A Technical ROI Analysis for B2B Integrators

Relying on cloud infrastructure for real-time video analytics introduces two critical failure points for commercial deployments: unacceptable API latency and exorbitant recurring bandwidth costs. When a retail digital signage network or an industrial surveillance grid requires continuous computer vision for object recognition, transmitting raw 4K video feeds to a centralized server is a fundamental architectural misstep.

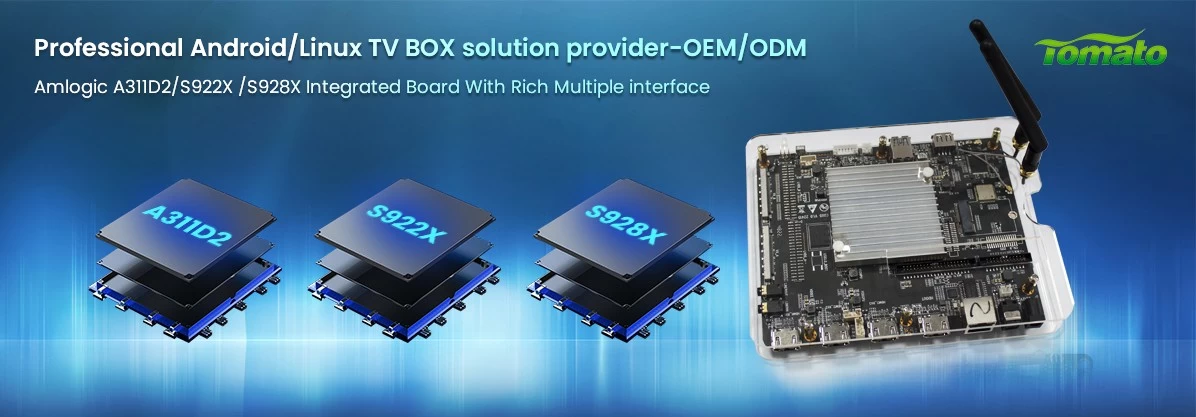

This bottleneck has accelerated the adoption of the AI Box—a dedicated hardware appliance designed to process machine learning workloads directly at the edge. However, for B2B procurement officers and system integrators, transitioning from standard media players to NPU-equipped hardware requires a significant upfront capital expenditure. Determining if an AI Box is worth the investment demands a strict evaluation of localized processing capabilities, thermal tolerances, and the resulting Total Cost of Ownership (TCO).

The Bandwidth Problem: Cloud Inference vs. Edge Computing

Standard Android TV boxes and digital signage players act as thin clients: they pull content from a server and display it. When you introduce computer vision requirements, such as audience demographic analysis or restricted-area monitoring, standard SoCs (Systems on Chip) without dedicated Neural Processing Units (NPUs) choke on the computational load.

Attempting to bypass this hardware limitation by offloading inference to cloud providers (such as AWS or Google Cloud) creates a severe financial drain. An enterprise network streaming hundreds of concurrent video feeds pays for the upstream bandwidth at every site and for the cloud compute that processes each stream. Deploying an AI Box resolves this by executing the machine learning models (TensorFlow Lite, PyTorch, ONNX) locally. The AI Box processes the visual data, extracts the metadata (e.g., "Male, 35-45, looked at display for 12 seconds"), and sends only a few kilobytes of text data back to the central server. The reduction in bandwidth costs is often the largest single contributor to recovering the hardware premium within the first 12 to 18 months of operation.
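To make the bandwidth gap concrete, here is a rough back-of-envelope sketch. The per-stream bitrate (15 Mbps for a compressed 4K feed), the event rate, the stream count, and the JSON field names are all illustrative assumptions, not measurements from any real deployment.

```python
import json

SECONDS_PER_MONTH = 30 * 24 * 3600  # assumes a 30-day month of continuous operation


def monthly_video_gb(streams: int, mbps_per_stream: float) -> float:
    """Data uploaded per month (GB) when every video stream is sent to the cloud."""
    bits = streams * mbps_per_stream * 1_000_000 * SECONDS_PER_MONTH
    return bits / 8 / 1e9


def monthly_metadata_gb(streams: int, events_per_minute: float, event: dict) -> float:
    """Data uploaded per month (GB) when only JSON metadata events are sent."""
    event_bytes = len(json.dumps(event).encode("utf-8"))
    events = streams * events_per_minute * (SECONDS_PER_MONTH / 60)
    return events * event_bytes / 1e9


# Hypothetical metadata event, mirroring the example in the text above.
sample_event = {"gender": "male", "age_range": "35-45", "dwell_seconds": 12}

# Assumed fleet: 100 cameras, 15 Mbps each, 10 metadata events per minute each.
video = monthly_video_gb(streams=100, mbps_per_stream=15.0)
meta = monthly_metadata_gb(streams=100, events_per_minute=10, event=sample_event)

print(f"cloud video upload:   {video:,.0f} GB/month")
print(f"edge metadata upload: {meta:,.2f} GB/month")
```

Swapping in your own bitrate and event rate gives a first-order estimate of the data volume at stake before any provider-specific pricing is applied.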

Evaluating NPU Benchmarks and Hardware Constraints

The defining characteristic of an AI Box is the NPU, measured in TOPS (Trillions of Operations Per Second). However, raw TOPS metrics are frequently manipulated in consumer spec sheets. For industrial applications, sustained performance under load is the only metric that matters.

-

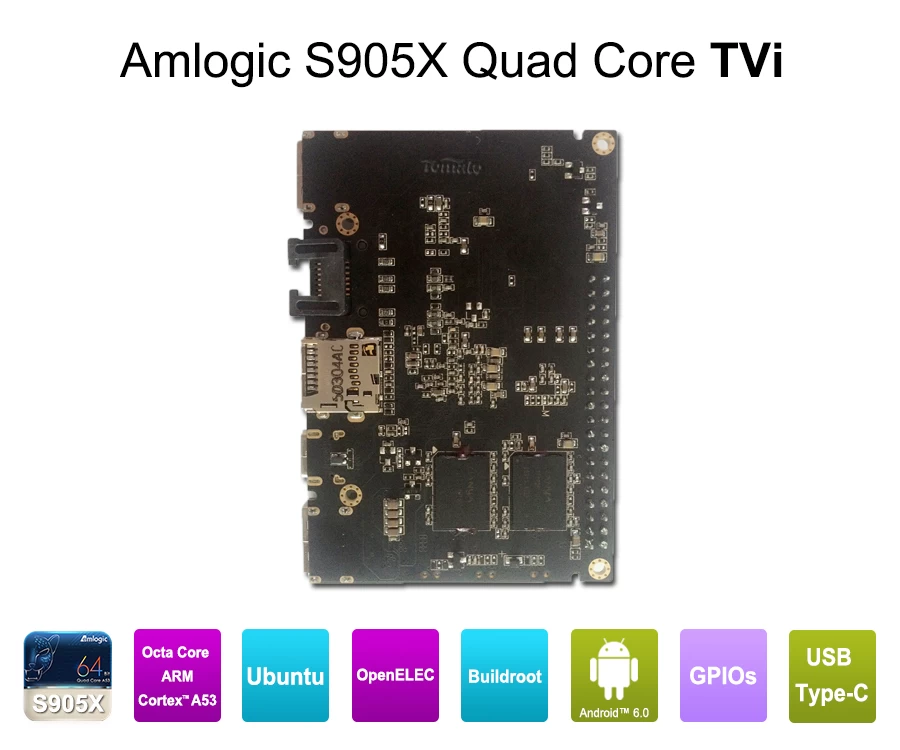

Silicon Architecture: Processors like the Rockchip RK3588 dominate the current AI Box landscape, offering up to 6.0 TOPS of INT8 computing power alongside an octa-core CPU. This allows the device to decode multiple concurrent video streams while running complex object detection algorithms simultaneously.

-

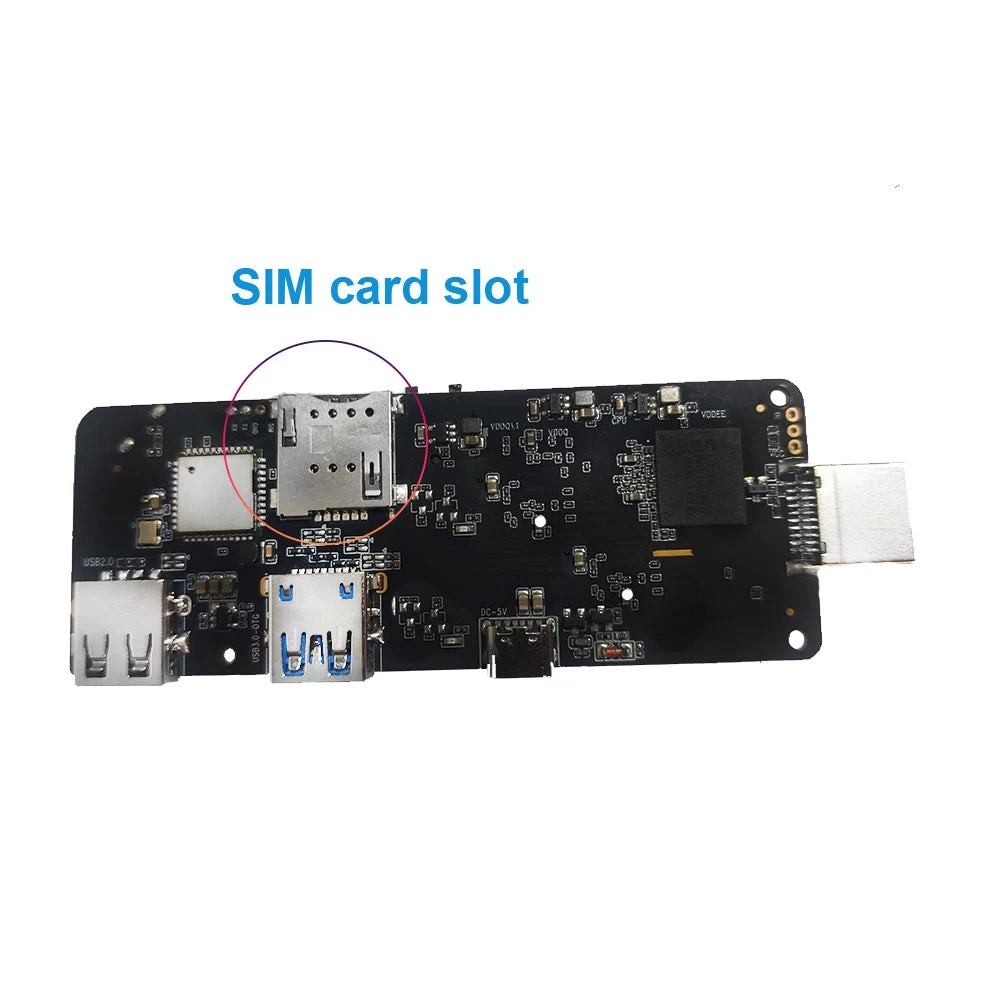

Memory Bandwidth: AI workloads are memory-intensive. An AI Box equipped with standard DDR3 or slow eMMC storage will bottleneck the NPU. B2B deployments require LPDDR4x or LPDDR5 RAM (minimum 8GB for complex vision models) and high-endurance NVMe or industrial eMMC 5.1 storage to handle the aggressive read/write cycles of localized data caching.

-

Thermal Design Power (TDP): NPUs generate substantial heat. A standard plastic enclosure will cause thermal throttling within hours, dropping inference accuracy and rendering the device useless. A commercial AI Box requires a custom PCBA layout with the NPU physically isolated from the PMIC (Power Management IC), housed in a finned, extruded aluminum chassis acting as a passive heatsink.
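One way to check sustained performance rather than peak TOPS is to measure inference throughput in consecutive time windows and compare the last window against the best one. The sketch below assumes a placeholder `fake_inference` function standing in for a real NPU call; the function names and window sizes are hypothetical, and a real soak test would use windows of minutes over a run of several hours.

```python
import time
from typing import Callable, List


def windowed_throughput(run_inference: Callable[[], None],
                        window_seconds: float,
                        windows: int) -> List[float]:
    """Run inference back to back and return inferences/second for each window."""
    results = []
    for _ in range(windows):
        count = 0
        start = time.monotonic()
        while time.monotonic() - start < window_seconds:
            run_inference()
            count += 1
        elapsed = time.monotonic() - start
        results.append(count / elapsed)
    return results


def sustained_ratio(throughputs: List[float]) -> float:
    """Final-window throughput divided by the peak window.

    Values well below 1.0 suggest the device slowed down over the run,
    which is what thermal throttling looks like from the application side.
    """
    return throughputs[-1] / max(throughputs)


def fake_inference() -> None:
    # Placeholder: sleeps briefly instead of invoking a real model.
    time.sleep(0.005)


rates = windowed_throughput(fake_inference, window_seconds=0.2, windows=3)
print([round(r, 1) for r in rates], round(sustained_ratio(rates), 2))
```

Running the same test in the intended enclosure and at the expected ambient temperature is what makes the ratio meaningful; a bench-top result with open airflow says little about a sealed installation.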

Firmware Engineering: The Overlooked Integration Layer

An AI Box is functionally inert without an optimized firmware layer bridging the hardware and the client's proprietary software. Off-the-shelf consumer Android builds, although derived from the open-source AOSP codebase, restrict the deep system access necessary for edge computing integration.

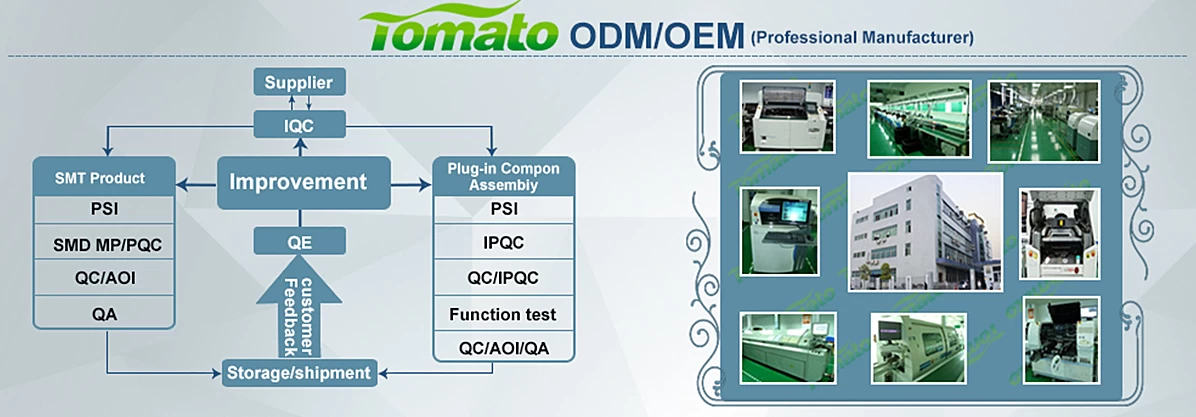

Deploying an AI Box at scale requires customized firmware engineering:

- Root-Level Access and API Hooks: Integrators require direct API access to the NPU to assign specific NPU cores or hardware threads to their proprietary models.

- OS Flexibility: While many digital signage applications run on Android, heavy AI inferencing often requires the stability and package management of Linux (Ubuntu/Debian). A capable hardware partner must offer dual-boot capabilities or custom Board Support Packages (BSPs) for multiple operating systems.

- Remote Management: Managing a fleet of edge computing devices requires secure Over-The-Air (OTA) update infrastructure and watchdog timers to automatically reboot the hardware in the event of a localized application crash.
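The watchdog idea in the last item can be illustrated with a minimal software heartbeat monitor. This is a sketch of the logic only: the class name, timeout, and recovery callback are hypothetical, and on Linux a production device would typically pair this with the hardware watchdog exposed through `/dev/watchdog` so that a hung kernel also triggers a reset.

```python
import time
from typing import Callable


class HeartbeatWatchdog:
    """Fires a recovery action when the application stops sending heartbeats."""

    def __init__(self, timeout_seconds: float, on_timeout: Callable[[], None],
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.timeout_seconds = timeout_seconds
        self.on_timeout = on_timeout
        self.clock = clock  # injectable so the logic can be tested without waiting
        self.last_beat = clock()

    def beat(self) -> None:
        """Called by the application on each healthy loop iteration."""
        self.last_beat = self.clock()

    def check(self) -> bool:
        """Return True and run the recovery action if the heartbeat is overdue."""
        if self.clock() - self.last_beat > self.timeout_seconds:
            self.on_timeout()
            self.last_beat = self.clock()  # re-arm after recovery
            return True
        return False


# Demonstration with a fake clock; a real recovery action might restart
# the inference service or request a system reboot.
now = [0.0]
recoveries = []
dog = HeartbeatWatchdog(10.0, on_timeout=lambda: recoveries.append(now[0]),
                        clock=lambda: now[0])

now[0] = 5.0
dog.beat()                 # application is healthy at t=5
now[0] = 12.0
fired_early = dog.check()  # only 7 s since the last heartbeat
now[0] = 20.0
fired_late = dog.check()   # 15 s since the last heartbeat
print(fired_early, fired_late, recoveries)
```

Keeping the clock injectable is a deliberate choice here: it lets the timeout behaviour be verified in a unit test instead of on a device in the field.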

Cost-Benefit Analysis: The 18-Month TCO

Are AI Boxes worth the premium? The answer lies in analyzing the 18-month TCO. While an AI Box may cost 200% to 300% more upfront than a standard digital signage player, the operational savings are immediate.

By eliminating the recurring costs of high-bandwidth commercial ISP connections, reducing cloud computing API calls by 95%, and utilizing industrial-grade components that lower the RMA failure rate, the break-even point for a localized edge computing network typically occurs between month 12 and month 18 of deployment.
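The break-even reasoning above reduces to simple arithmetic: divide the extra upfront cost by the monthly operational savings. The sketch below uses hypothetical per-device figures (a 150-unit standard player, an AI Box costing 250% more, and 25 units of monthly savings) purely to illustrate the calculation; substitute your own quotes and measured savings.

```python
import math


def break_even_month(hardware_premium: float, monthly_savings: float) -> int:
    """First month in which cumulative savings cover the extra hardware cost."""
    if monthly_savings <= 0:
        raise ValueError("monthly_savings must be positive")
    return math.ceil(hardware_premium / monthly_savings)


# Hypothetical per-device figures, in any consistent currency.
standard_player_cost = 150.0
ai_box_cost = standard_player_cost * 3.5   # "250% more" means 3.5x the base price
monthly_savings = 25.0                     # assumed bandwidth + cloud savings

premium = ai_box_cost - standard_player_cost
month = break_even_month(premium, monthly_savings)
print(f"premium: {premium:.2f}, break-even in month {month}")
```

Note that "200% to 300% more" corresponds to three to four times the base price, so the premium to recover is two to three times the cost of the standard player.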

For B2B integrators transitioning into smart retail, autonomous security, or industrial IoT, the AI Box is not a discretionary upgrade; it is a mandatory architectural requirement. To evaluate the specific NPU requirements, thermal designs, and custom firmware configurations needed for your edge computing deployment, consult with a specialized OEM manufacturer to architect a solution that aligns with your project constraints.